If you’ve ever tried writing multithreaded code in C or C++, you know the pain. Data races, segfaults at 2 AM, bugs that only show up in production. Rust takes a fundamentally different approach. Its ownership system and type checker catch concurrency bugs at compile time, long before they reach your users.

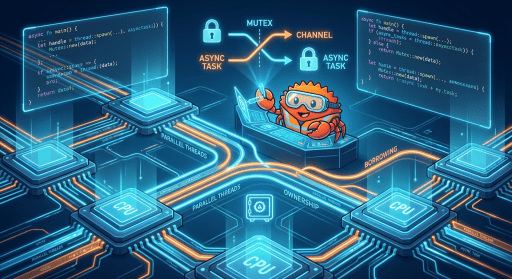

This article walks through everything you need to know about concurrency and parallelism in Rust. We’ll cover threads, shared state, message passing, async/await, and the magical Send and Sync traits that make Rust’s safety guarantees possible.

Concurrency vs Parallelism: What’s the Difference?

Before writing any code, it’s worth getting the terminology straight. These two words get tossed around interchangeably, but they mean different things.

Concurrency is about dealing with multiple tasks at once. It’s a way to structure a program so it can switch between different tasks, making progress on each. These tasks might not all be executing at the exact same instant, especially on a single-core processor, but they overlap in time. Think of a chef juggling multiple orders in a kitchen: they switch between chopping vegetables for one order, checking the oven for another, and plating a third. They are concurrently handling multiple orders.

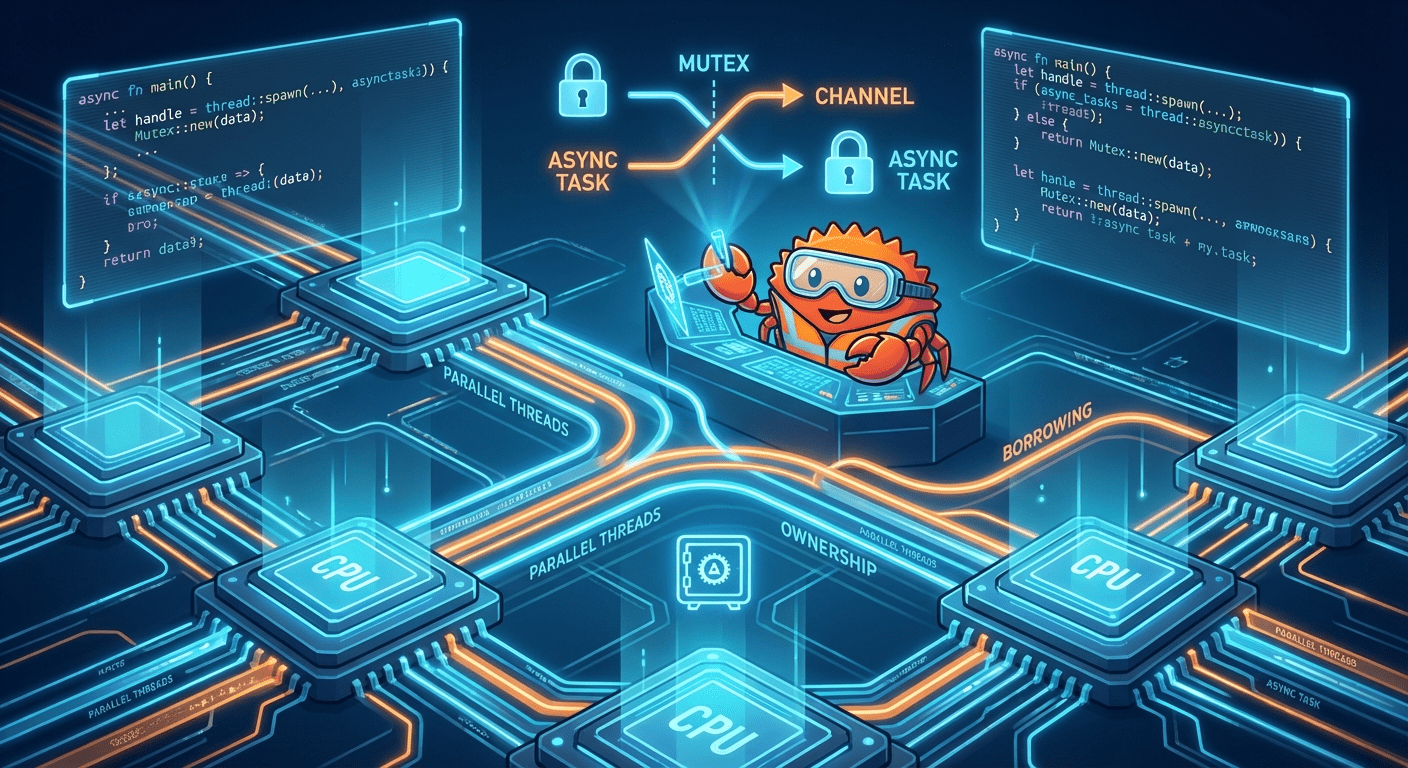

Parallelism is about doing multiple tasks at the exact same time. This requires hardware with multiple processing units, such as multi-core CPUs. If our chef had two assistant chefs, they could chop vegetables, stir a sauce, and bake a dish all at once — in parallel.

In Rust, you can write code that is concurrent (structured to handle multiple tasks) and, when running on multi-core hardware, that code can also execute in parallel. The language gives you tools for both.

Why Does Concurrency Matter?

There are two big reasons concurrency is essential in modern software.

For applications with UIs — whether desktop GUIs or web servers — concurrency is vital for responsiveness. Imagine a desktop application that needs to perform a long calculation. If it does this on its main thread without concurrency, the entire UI freezes until the calculation is done. By running the calculation on a separate thread, the main thread stays free to respond to user input.

Programs also frequently wait for I/O operations: reading files, making network requests, querying databases. Concurrency allows a program to start an I/O operation and then switch to another task while waiting, rather than sitting idle. This is the foundation of efficient network services and applications dealing with many external resources.

The Classic Challenges: Race Conditions and Deadlocks

Concurrency isn’t free. It introduces two notorious categories of bugs.

A race condition occurs when the outcome of a program depends on the unpredictable order in which threads execute. The most common form is a data race: two or more threads access the same data concurrently, at least one of them modifies it, and nothing synchronizes the accesses. For example, if two threads try to increment a shared counter (counter += 1) without proper synchronization, both might read the same initial value, both increment it, and both write back the same new value, effectively losing one of the increments.

A deadlock happens when two or more threads are blocked forever, each waiting for the other to release a resource. Thread A has locked Resource X and is waiting for Resource Y, while Thread B has locked Resource Y and is waiting for Resource X. Neither can proceed, and the program grinds to a halt.

These issues arise from shared mutable state and the need to synchronize access to it. Rust’s ownership model rules out data races in safe code at compile time, but it does not prevent deadlocks or higher-level race conditions where the logic itself depends on timing, so understanding them is still critical for writing correct concurrent code.

Creating and Managing Threads

The primary way to create a new thread in Rust is by calling std::thread::spawn. This function takes a closure as an argument, and that closure contains the code the new thread will execute. It returns a JoinHandle, which you can use later to wait for the thread to finish.

Let’s look at a basic example:

use std::thread;
use std::time::Duration;

fn main() {
    println!("Main thread: Starting up!");

    let handle = thread::spawn(|| {
        for i in 1..=5 {
            println!("New thread: count {}", i);
            thread::sleep(Duration::from_millis(500));
        }
        println!("New thread: I'm done!");
    });

    for i in 1..=3 {
        println!("Main thread: working... {}", i);
        thread::sleep(Duration::from_millis(300));
    }

    println!("Main thread: Waiting for the new thread to finish...");
    handle.join().unwrap();
}

There’s a lot happening here, so let’s break it down piece by piece.

The call to thread::spawn starts a new operating system thread immediately. The closure we pass in — the block between || { and } — is the code that runs on that new thread. In this case, it counts from 1 to 5, pausing half a second between each count.

Meanwhile, the main thread doesn’t wait around. It continues executing its own loop right away, counting from 1 to 3 with 300-millisecond pauses. This is concurrency in action: both threads are making progress, interleaving their output in an unpredictable order depending on how the OS schedules them.

The handle variable holds a JoinHandle. When we call handle.join().unwrap() at the end, the main thread blocks and waits for the spawned thread to finish. Without this line, the main thread might exit before the spawned thread completes its work, and the spawned thread would be killed. The join method returns a Result — it gives you Ok(value) if the thread completed successfully, or Err(error) if the thread panicked.

Moving Data Into Threads with move Closures

What happens when a spawned thread needs access to data from the parent thread? If the closure tries to capture a variable by reference, the compiler will reject it. Why? Because Rust can’t guarantee that the reference will remain valid for the entire lifetime of the new thread. The parent thread might drop the data before the child thread is done using it.

The solution is the move keyword. A move closure takes ownership of the variables it captures:

use std::thread;

fn main() {
    let message = String::from("Hello from the main thread!");
    let important_number = 42;

    let handle = thread::spawn(move || {
        println!("Spawned thread received message: '{}'", message);
        println!("Spawned thread received number: {}", important_number);
    });

    // This would cause a compile error:
    // println!("Main thread still has message: {}", message);

    handle.join().unwrap();
}

The move keyword before the closure forces it to take ownership of both message and important_number. Once the closure owns message, main can no longer use it. If you uncomment the println! that follows the spawn call, the compiler rejects the program with error E0382, “borrow of moved value: `message`”, and labels the offending line “value borrowed here after move.”

For String, which doesn’t implement Copy, ownership is truly transferred — the main thread can no longer use it. For i32, which does implement Copy, a copy of the value is moved into the closure, so the original would technically still be usable. But the move keyword applies uniformly to all captured variables.
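To make the Copy versus non-Copy distinction concrete, here is a small sketch. The function name move_demo and the variable names are made up for illustration; the point is only that a Copy value stays usable after a move closure, while a String has to be cloned first if the parent still needs it.

```rust
use std::thread;

/// Hypothetical helper: moves a String clone and an i32 into a thread,
/// then returns what the thread saw plus what the caller still owns.
fn move_demo() -> (String, i32, String) {
    let message = String::from("hello");
    let number = 42; // i32 implements Copy

    // Clone first if the parent thread still needs the String afterwards.
    let message_for_thread = message.clone();

    let handle = thread::spawn(move || {
        // The closure owns `message_for_thread` and its own copy of `number`.
        format!("{} / {}", message_for_thread, number)
    });

    let seen_by_thread = handle.join().unwrap();

    // `number` was copied and `message` was never moved, so both still work here.
    (seen_by_thread, number, message)
}

fn main() {
    let (seen, number, message) = move_demo();
    println!("Thread saw: {}", seen);
    println!("Main still has: {} and {}", number, message);
}
```

Cloning is a reasonable choice for small values like this one; for large or frequently shared data, a reference-counted pointer avoids the copy.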

This is Rust’s ownership system working hand-in-hand with its concurrency model. Instead of sharing mutable references unsafely, you transfer ownership into the new thread. The compiler enforces this at compile time, making data races structurally impossible.
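Transferring ownership is not the only option. When the threads are guaranteed to finish before the data goes away, std::thread::scope (stable since Rust 1.63) lets them borrow instead. The sketch below is one possible use of it; parallel_sum is a hypothetical helper, not something from the examples above.

```rust
use std::thread;

/// Hypothetical helper: sums two halves of a slice on two scoped threads
/// that borrow the data rather than taking ownership of it.
fn parallel_sum(data: &[u64]) -> u64 {
    let (left, right) = data.split_at(data.len() / 2);

    thread::scope(|s| {
        // Scoped threads may borrow `left` and `right` because the scope
        // joins every thread it spawned before `scope` returns.
        let left_handle = s.spawn(|| left.iter().sum::<u64>());
        let right_handle = s.spawn(|| right.iter().sum::<u64>());

        left_handle.join().unwrap() + right_handle.join().unwrap()
    })
}

fn main() {
    let numbers: Vec<u64> = (1..=100).collect();
    let total = parallel_sum(&numbers);
    // `numbers` is still owned by main; nothing was moved into the threads.
    println!("Sum of {} numbers = {}", numbers.len(), total);
}
```

The compiler accepts the borrows here for the same reason it rejects them with thread::spawn: it can prove the threads cannot outlive the borrowed data.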

Handling Thread Return Values and Panics

Spawned threads can return values, and they can also panic. The JoinHandle::join() method handles both cases through its Result return type. If the thread completes successfully, join() returns Ok(value). If the thread panics, join() returns Err(error).

Here’s an example that demonstrates both scenarios:

use std::thread;
use std::time::Duration;

fn main() {
    let worker_handle = thread::spawn(|| {
        println!("Worker (Success): Starting computation...");
        thread::sleep(Duration::from_secs(1));
        println!("Worker (Success): Computation finished.");
        42 // Return value
    });

    let panicking_handle = thread::spawn(|| {
        println!("Worker (Panic): I'm about to panic!");
        panic!("The worker thread has panicked!");
    });

    match worker_handle.join() {
        Ok(result) => println!("Main: Successful worker returned: {}", result),
        Err(_) => println!("Main: Successful worker panicked unexpectedly!"),
    }

    match panicking_handle.join() {
        Ok(_) => println!("Main: Panicking worker somehow succeeded?"),
        Err(e) => println!("Main: Panicking worker failed as expected: {:?}", e),
    }

    println!("Main: Program continues running.");
}

The first thread does some simulated work and returns the value 42. When we call join() on its handle, we get Ok(42), and we can use that value however we like.

The second thread calls panic!(). This terminates that specific worker thread, but not the main thread. When the main thread calls join() on the panicking handle, the panic is “caught,” and join() returns an Err. Our match statement handles this gracefully, logging the error and continuing execution.

This isolation of panics is a key safety feature of Rust’s concurrency model. By default, a panic in one thread does not bring down the entire program (unless it’s the main thread). This is why you should prefer using match to handle the Result from join() in production code. Using .unwrap() is just a shortcut that says “if this thread panics, I want my main thread to panic too” — which is often not the robust behavior you want.
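The Err value from join() is a Box<dyn Any + Send>, so printing it with {:?} is not very informative. One way to recover the panic message is to downcast the payload, as in this sketch. The run_worker function and its error strings are illustrative assumptions, not part of the example above.

```rust
use std::thread;

/// Hypothetical helper: runs a worker and turns a panic into an error
/// string instead of letting it propagate to the caller.
fn run_worker(should_panic: bool) -> Result<u32, String> {
    let handle = thread::spawn(move || {
        if should_panic {
            panic!("worker failed");
        }
        42
    });

    handle.join().map_err(|payload| {
        // panic! with a plain string literal stores a &'static str;
        // a formatted panic message is stored as a String.
        if let Some(msg) = payload.downcast_ref::<&str>() {
            msg.to_string()
        } else if let Some(msg) = payload.downcast_ref::<String>() {
            msg.clone()
        } else {
            "unknown panic payload".to_string()
        }
    })
}

fn main() {
    println!("Healthy worker: {:?}", run_worker(false));
    println!("Panicking worker: {:?}", run_worker(true));
}
```

Converting the payload to a String at the boundary keeps the rest of the program working with ordinary Result values.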

Shared State with Arc<T>: Thread-Safe Shared Ownership

When multiple threads need to read the same data, you need a way to share ownership across thread boundaries. That’s what Arc<T> (Atomically Reference Counted) is for. It works like Rc<T>, but uses atomic operations to manage its reference count, making it safe to use across threads.

use std::sync::Arc;
use std::thread;

struct ImportantConfig {
    api_url: String,
    max_retries: u32,
}

fn main() {
    let config = Arc::new(ImportantConfig {
        api_url: "https://api.example.com/data".to_string(),
        max_retries: 5,
    });

    let mut handles = vec![];

    for i in 0..3 {
        let config_clone = Arc::clone(&config);
        let handle = thread::spawn(move || {
            println!(
                "Thread {}: Using API URL '{}' with max retries {}",
                i, config_clone.api_url, config_clone.max_retries
            );
        });
        handles.push(handle);
    }

    for handle in handles {
        handle.join().unwrap();
    }
}

Before spawning each thread, we call Arc::clone(&config). This doesn’t deep-copy the ImportantConfig data. Instead, it creates a new pointer to the same underlying data and atomically increments the reference count. The cloned Arc is then moved into the closure for the new thread.

Each thread can safely read from its config_clone because Arc<T> ensures the data lives as long as at least one Arc points to it. The data is only dropped when the last Arc pointing to it is dropped.

The key difference between Arc<T> and Rc<T> is that Arc<T> uses atomic operations — special CPU instructions that guarantee updates to the reference count are indivisible and cannot be interrupted by other threads. This prevents data races on the count itself, but it comes with a small performance cost compared to Rc<T>.
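You can watch the reference count change with Arc::strong_count. The following sketch uses a hypothetical strong_counts function that records the count before cloning, after cloning, and after the thread holding the clone has finished.

```rust
use std::sync::Arc;
use std::thread;

/// Hypothetical helper: returns the strong count before cloning,
/// after cloning, and after the thread that owned the clone finished.
fn strong_counts() -> (usize, usize, usize) {
    let shared = Arc::new(vec![1, 2, 3]);
    let before = Arc::strong_count(&shared); // 1: only `shared`

    let for_thread = Arc::clone(&shared);
    let during = Arc::strong_count(&shared); // 2: `shared` and `for_thread`

    thread::spawn(move || {
        let total: i32 = for_thread.iter().sum();
        println!("Thread sees total {}", total);
        // `for_thread` is dropped when the closure ends.
    })
    .join()
    .unwrap();

    let after = Arc::strong_count(&shared); // back to 1
    (before, during, after)
}

fn main() {
    let (before, during, after) = strong_counts();
    println!("Counts: before={}, during={}, after={}", before, during, after);
}
```

The vector itself is never copied; only the counter moves up and down.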

By default, Arc<T> provides shared, read-only access. It implements Deref but not DerefMut, so you can’t simply borrow a &mut T through it, and Arc::get_mut only succeeds while no other Arc or Weak pointer to the same data exists. For mutable shared state, you need to combine Arc<T> with a synchronization primitive like Mutex<T>.

Mutex<T>: Mutual Exclusion for Mutable Shared Data

Arc<T> solves shared ownership, but what if multiple threads need to mutate the shared data? That’s where Mutex<T> comes in. A Mutex<T> ensures that only one thread can access the data it protects at any given time. To access the data, a thread must first acquire the “lock” on the mutex.

Here’s the classic shared counter example:

use std::sync::{Arc, Mutex};
use std::thread;

fn main() {
    let counter = Arc::new(Mutex::new(0u32));
    let mut handles = vec![];

    for i in 0..5 {
        let counter_clone = Arc::clone(&counter);
        let handle = thread::spawn(move || {
            for _ in 0..10 {
                let mut num_guard = counter_clone.lock().unwrap();
                *num_guard += 1;
            }
            println!("Thread {}: Finished incrementing.", i);
        });
        handles.push(handle);
    }

    for handle in handles {
        handle.join().unwrap();
    }

    let final_value = *counter.lock().unwrap();
    println!("Final counter value = {}", final_value); // Expected: 50
}

Let’s trace through what’s happening. We create a Mutex<u32> initialized to 0, then wrap it in an Arc so multiple threads can share ownership of it. We spawn 5 threads, each of which increments the counter 10 times.

Inside each thread, counter_clone.lock().unwrap() attempts to acquire the lock. If another thread currently holds the lock, this call blocks: the thread waits until the lock becomes available. lock() returns a Result because a mutex becomes “poisoned” if a thread panics while holding it, and unwrap() simply panics in that case. On success we get a MutexGuard, which implements Deref and DerefMut, giving us mutable access to the inner u32.

The line *num_guard += 1 dereferences the guard and increments the value. When num_guard goes out of scope at the end of the loop iteration, the lock is released automatically. This is Rust’s RAII pattern at work — resource cleanup tied to scope, not manual calls.

After all threads finish, we lock the mutex one more time in the main thread to read the final value. With 5 threads each incrementing 10 times, we reliably get 50. No race conditions, no lost updates, guaranteed by the type system.

Deadlocks: What Mutex Doesn’t Prevent

While Mutex<T> prevents data races, it introduces the possibility of deadlocks. A deadlock occurs if threads try to acquire multiple locks in different orders:

- Thread A locks Mutex 1, then tries to lock Mutex 2.

- Thread B locks Mutex 2, then tries to lock Mutex 1.

If both threads acquire their first lock and then block waiting for the second, they will wait forever.

Rust doesn’t prevent deadlocks at compile time — it’s a complex runtime problem. But its ownership system and explicit locking help you reason about them more clearly. The standard prevention strategy is to ensure that all threads acquire locks in a consistent global order when they need multiple locks.
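As a rough sketch of the consistent-ordering strategy, the example below moves money between two accounts from two threads that transfer in opposite directions. The transfer and run_transfers functions, and the rule "lock the lower index first", are assumptions chosen for illustration; any fixed global order works.

```rust
use std::sync::{Arc, Mutex};
use std::thread;

/// Hypothetical helper: moves `amount` between two balances, always
/// locking the lower-indexed account first regardless of direction.
fn transfer(accounts: &[Mutex<i64>], from: usize, to: usize, amount: i64) {
    assert_ne!(from, to, "cannot transfer to the same account");
    let (first, second) = if from < to { (from, to) } else { (to, from) };

    // Every thread takes the locks in the same order, so no thread can
    // hold one lock while waiting for a lock that is taken "earlier".
    let mut first_guard = accounts[first].lock().unwrap();
    let mut second_guard = accounts[second].lock().unwrap();

    if first == from {
        *first_guard -= amount;
        *second_guard += amount;
    } else {
        *second_guard -= amount;
        *first_guard += amount;
    }
}

fn run_transfers() -> (i64, i64) {
    let accounts = Arc::new(vec![Mutex::new(100_i64), Mutex::new(100_i64)]);
    let a = Arc::clone(&accounts);
    let b = Arc::clone(&accounts);

    // The two threads transfer in opposite directions, which is the
    // classic recipe for a deadlock if each locked "its" account first.
    let t1 = thread::spawn(move || {
        for _ in 0..1000 {
            transfer(&a, 0, 1, 1);
        }
    });
    let t2 = thread::spawn(move || {
        for _ in 0..1000 {
            transfer(&b, 1, 0, 1);
        }
    });
    t1.join().unwrap();
    t2.join().unwrap();

    let x = *accounts[0].lock().unwrap();
    let y = *accounts[1].lock().unwrap();
    (x, y)
}

fn main() {
    let (x, y) = run_transfers();
    println!("Final balances: {} and {}", x, y);
}
```

If transfer locked `from` before `to` instead, the same program could hang intermittently, which is exactly the kind of bug the compiler cannot catch for you.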

RwLock<T>: Multiple Readers or One Writer

Sometimes the exclusive access provided by Mutex<T> is too restrictive. If you have data that is read much more often than it is written, a mutex forces readers to wait for each other unnecessarily. RwLock<T> solves this by allowing either many concurrent readers or one exclusive writer.

use std::sync::{Arc, RwLock};
use std::thread;
use std::time::Duration;
use std::collections::HashMap;

fn main() {
    let cache: Arc<RwLock<HashMap<String, String>>> =
        Arc::new(RwLock::new(HashMap::new()));
    let mut handles = vec![];

    // Writer thread
    let cache_writer = Arc::clone(&cache);
    let writer_handle = thread::spawn(move || {
        let mut guard = cache_writer.write().unwrap();
        println!("Writer: Acquired write lock. Populating cache...");
        guard.insert("url1".to_string(), "Data for URL1".to_string());
        guard.insert("url2".to_string(), "Data for URL2".to_string());
        thread::sleep(Duration::from_millis(100));
        println!("Writer: Cache populated. Releasing write lock.");
    });
    handles.push(writer_handle);

    // Reader threads
    for i in 0..3 {
        let cache_reader = Arc::clone(&cache);
        let reader_handle = thread::spawn(move || {
            thread::sleep(Duration::from_millis(150 + 20 * i as u64));
            let guard = cache_reader.read().unwrap();
            println!("Reader {}: Acquired read lock.", i);
            if let Some(data) = guard.get("url1") {
                println!("Reader {}: Found url1: '{}'", i, data);
            }
        });
        handles.push(reader_handle);
    }

    for handle in handles {
        handle.join().unwrap();
    }
}

The writer thread calls cache_writer.write().unwrap() to acquire an exclusive write lock. While this lock is held, no other thread — reader or writer — can access the data. The writer inserts entries into the HashMap, and the lock is released when the guard goes out of scope.

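Each reader thread calls cache_reader.read().unwrap() to acquire a shared read lock. Multiple readers can hold read locks simultaneously, which is the whole point. They block while a writer holds the write lock. Whether a reader also has to queue behind a writer that is still waiting depends on the operating system’s lock implementation, since the standard library does not guarantee a particular priority policy.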

This pattern is ideal for caches, configuration stores, and any data structure where reads vastly outnumber writes. The RwLock lets you maximize read throughput while still ensuring writes are safe and exclusive.
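A typical cache lookup combines both lock modes: try the cheap read lock first and fall back to the write lock only on a miss. The sketch below shows one way to do that. The get_or_insert function and the "Data for ..." placeholder value are hypothetical stand-ins for a real fetch.

```rust
use std::collections::HashMap;
use std::sync::RwLock;

/// Hypothetical helper: returns the cached value for `key`, computing and
/// storing a placeholder value on a miss.
fn get_or_insert(cache: &RwLock<HashMap<String, String>>, key: &str) -> String {
    // Fast path: a shared read lock is enough when the key is present.
    {
        let guard = cache.read().unwrap();
        if let Some(value) = guard.get(key) {
            return value.clone();
        }
    } // The read lock is released here, before the write lock is requested.

    // Slow path: take the exclusive write lock. Another thread may have
    // inserted the key in the meantime, so `entry` re-checks before inserting.
    let mut guard = cache.write().unwrap();
    guard
        .entry(key.to_string())
        .or_insert_with(|| format!("Data for {}", key))
        .clone()
}

fn main() {
    let cache = RwLock::new(HashMap::new());
    println!("First lookup:  {}", get_or_insert(&cache, "url1"));
    println!("Second lookup: {}", get_or_insert(&cache, "url1"));
    println!("Entries in cache: {}", cache.read().unwrap().len());
}
```

Releasing the read guard before asking for the write lock matters: requesting a write lock while the same thread still holds a read lock on the same RwLock can deadlock.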

Message Passing with Channels

Instead of multiple threads trying to access and modify the same piece of memory (protected by locks), message passing involves one thread sending a piece of data to another thread. Once the data is sent, the sending thread gives up ownership, and the receiving thread takes ownership. This can lead to simpler designs because you’re thinking about data flow rather than shared access patterns.

Rust’s standard library provides channels through the std::sync::mpsc module. The name stands for “multiple producer, single consumer” — many threads can send messages, but only one thread receives them.

use std::sync::mpsc;
use std::thread;
use std::time::Duration;

fn main() {
    let (tx, rx) = mpsc::channel();
    let tx1 = tx.clone();

    let handle1 = thread::spawn(move || {
        tx1.send("Alpha from Producer 1".to_string()).unwrap();
        thread::sleep(Duration::from_millis(50));
        tx1.send("Beta from Producer 1".to_string()).unwrap();
        println!("Producer 1: All messages sent.");
    });

    let handle2 = thread::spawn(move || {
        tx.send("Gamma from Producer 2".to_string()).unwrap();
        thread::sleep(Duration::from_millis(80));
        tx.send("Delta from Producer 2".to_string()).unwrap();
        println!("Producer 2: All messages sent.");
    });

    println!("Consumer: Waiting for messages...");
    for received in rx {
        println!("Consumer: Received: '{}'", received);
    }
    println!("Consumer: Channel disconnected, all producers finished.");

    handle1.join().unwrap();
    handle2.join().unwrap();
}

We create a channel with mpsc::channel(), which returns a sender (tx) and a receiver (rx). To have multiple producers, we clone the sender with tx.clone(). Each clone is an independent sender that can be moved into a different thread.

Producer 1 gets tx1 (the clone), and Producer 2 gets tx (the original). Both are moved into their respective threads. Each producer sends string messages through its sender using .send().

The consumer (main thread) uses for received in rx to iterate over incoming messages. This is idiomatic Rust for channel consumption. The loop blocks when the channel is temporarily empty, waiting for the next message. It only terminates when all senders have been dropped — meaning both tx and tx1 are gone, signaling that no more messages will ever arrive.

This is a clean, ownership-driven model. Data flows in one direction, ownership transfers cleanly, and there’s no shared mutable state to worry about.
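One practical consequence of the "all senders dropped" rule shows up when you clone a sender inside a loop: the original tx stays alive in the parent and must be dropped explicitly, or the receiving loop never ends. Here is a minimal sketch of that fan-in pattern, using a hypothetical sum_of_squares function.

```rust
use std::sync::mpsc;
use std::thread;

/// Hypothetical helper: each worker sends the square of its id, and the
/// caller adds up everything that arrives on the channel.
fn sum_of_squares(workers: u64) -> u64 {
    let (tx, rx) = mpsc::channel();
    let mut handles = Vec::new();

    for id in 1..=workers {
        let tx = tx.clone(); // each worker gets its own sender
        handles.push(thread::spawn(move || {
            tx.send(id * id).unwrap();
            // This worker's sender is dropped when the closure returns.
        }));
    }

    // Drop the original sender. Without this line the channel never
    // disconnects and `rx.iter()` below would block forever.
    drop(tx);

    let total: u64 = rx.iter().sum();

    for handle in handles {
        handle.join().unwrap();
    }
    total
}

fn main() {
    println!("Sum of squares from 4 workers: {}", sum_of_squares(4));
}
```

The order in which the values arrive is unpredictable, but the sum does not depend on it.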

Using Channels for Coordination

Channels aren’t just for passing data. You can use them to build synchronization protocols between threads. A common pattern uses two channels: one for signaling and one for results.

use std::sync::mpsc;
use std::thread;
use std::time::Duration;

fn main() {
    let (start_tx, start_rx) = mpsc::channel();
    let (result_tx, result_rx) = mpsc::channel();

    let worker_handle = thread::spawn(move || {
        println!("Worker: Waiting for start signal...");
        start_rx.recv().expect("Failed to receive start signal.");
        println!("Worker: Signal received! Starting work...");
        thread::sleep(Duration::from_secs(1));
        let result = "Computation complete!".to_string();
        result_tx.send(result).expect("Failed to send result.");
    });

    println!("Main: Performing setup...");
    thread::sleep(Duration::from_millis(500));
    println!("Main: Setup complete. Sending start signal.");
    start_tx.send(()).expect("Failed to send start signal.");

    let output = result_rx.recv().expect("Failed to receive result.");
    println!("Main: Received from worker: '{}'", output);

    worker_handle.join().unwrap();
}

The start_tx/start_rx channel lets the main thread tell the worker when it’s okay to begin. The worker calls start_rx.recv(), which blocks until the main thread sends a signal. We send () (the unit type) because we don’t need to transmit any data — it’s a pure signal.

The result_tx/result_rx channel flows in the opposite direction. After the worker finishes its computation, it sends the result back to the main thread. The main thread blocks on result_rx.recv() until the result arrives.

This two-channel pattern gives you precise control over thread coordination without any shared state or locks. The main thread can perform setup work, signal the worker when ready, and then wait for the result — all through clean ownership transfers.
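Blocking forever on recv() is not always acceptable. If the main thread should give up after a while, Receiver::recv_timeout is one option. The sketch below assumes a hypothetical wait_for_result function whose worker sleeps for a configurable time; the durations are arbitrary.

```rust
use std::sync::mpsc::{self, RecvTimeoutError};
use std::thread;
use std::time::Duration;

/// Hypothetical helper: starts a worker that takes `work_ms` milliseconds
/// and waits at most `timeout_ms` milliseconds for its result.
fn wait_for_result(work_ms: u64, timeout_ms: u64) -> Result<String, RecvTimeoutError> {
    let (result_tx, result_rx) = mpsc::channel();

    thread::spawn(move || {
        thread::sleep(Duration::from_millis(work_ms));
        // The receiver may already have given up, so a send error is ignored.
        let _ = result_tx.send("Computation complete!".to_string());
    });

    result_rx.recv_timeout(Duration::from_millis(timeout_ms))
}

fn main() {
    match wait_for_result(10, 2000) {
        Ok(output) => println!("Main: Received from worker: '{}'", output),
        Err(e) => println!("Main: Gave up waiting: {:?}", e),
    }
    match wait_for_result(500, 20) {
        Ok(output) => println!("Main: Received from worker: '{}'", output),
        Err(e) => println!("Main: Gave up waiting: {:?}", e),
    }
}
```

Note that the timeout only stops the waiting; the worker thread keeps running until it finishes on its own.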

The Send and Sync Traits

At the heart of Rust’s concurrency safety are two marker traits: Send and Sync. You rarely implement them manually, but the compiler checks them automatically every time you use threads.

Send means a type can be safely transferred (sent) to another thread. Most types in Rust are Send. Notable exceptions include Rc<T>, which uses non-atomic reference counting and would be unsafe to share across threads.

Sync means a type can be safely shared between threads via references. A type T is Sync if &T is Send. Types like Mutex<T> are Sync (provided T is Send) because their locking mechanism ensures safe concurrent access.

If you try to send a non-Send type to another thread, or share a non-Sync type between threads through a reference or an Arc<T>, your code won’t compile. The compiler is preventing potential data races.
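If you want to check ahead of time whether a type satisfies these bounds, a small generic helper does the job, because a call to it only compiles when the bound holds. The is_send and is_sync functions below are hypothetical helpers written for this sketch, not standard library items.

```rust
use std::sync::{Arc, Mutex};

/// Hypothetical helper: a call to this only compiles when T: Send.
fn is_send<T: Send>() -> bool {
    true
}

/// Hypothetical helper: a call to this only compiles when T: Sync.
fn is_sync<T: Sync>() -> bool {
    true
}

fn main() {
    // These compile, which is the actual check.
    println!("String is Send: {}", is_send::<String>());
    println!("String is Sync: {}", is_sync::<String>());
    println!("Arc<Mutex<Vec<u8>>> is Send: {}", is_send::<Arc<Mutex<Vec<u8>>>>());
    println!("Arc<Mutex<Vec<u8>>> is Sync: {}", is_sync::<Arc<Mutex<Vec<u8>>>>());

    // Uncommenting the next line fails to compile, because Rc uses
    // non-atomic reference counting and is therefore not Send:
    // is_send::<std::rc::Rc<String>>();
}
```

The return value is incidental; the useful part is that the compiler rejects the call for any type that does not meet the bound.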

“Listen to the compiler. When it gives you errors related to Send, Sync, or lifetimes in a concurrent context, it’s usually pointing to a genuine safety issue. Don’t try to fight it with unsafe code unless you are an expert and know exactly what you’re doing. Instead, rethink your data sharing or ownership strategy.”

When you hit a Send/Sync error, the fix is almost always one of these:

- Use Arc<T> for shared ownership across threads

- Use Mutex<T> or RwLock<T> for interior mutability of shared data

- Switch to message passing with channels

Async/Await: Non-Blocking Concurrency

Threads are great, but they’re heavyweight. Each OS thread consumes memory for its stack, and context switching between thousands of threads is expensive. For I/O-heavy workloads — like web servers handling thousands of connections — async/await provides a lighter-weight alternative.

In Rust, async fn returns a Future instead of executing immediately. The .await keyword pauses execution of the current future until the awaited future completes, allowing other tasks to run in the meantime.

Here’s the simplest possible async example, using futures::executor::block_on as a minimal executor:

use futures::executor::block_on;
use std::thread;
use std::time::Duration;

async fn fetch_simulated_data(id: u32) -> String {
    println!("Task {}: Starting fetch...", id);
    thread::sleep(Duration::from_secs(1)); // Simulated delay
    println!("Task {}: Fetch complete.", id);
    format!("Data from task {}", id)
}

async fn process_tasks_sequentially() {
    let result1 = fetch_simulated_data(1).await;
    println!("Got: {}", result1);

    let result2 = fetch_simulated_data(2).await;
    println!("Got: {}", result2);
}

fn main() {
    block_on(process_tasks_sequentially());
}

Calling process_tasks_sequentially() doesn’t run anything — it creates a Future. The block_on function is a simple executor that takes that Future and blocks the current thread while it runs to completion.

Inside process_tasks_sequentially, we call fetch_simulated_data(1), which also returns a Future. The .await keyword tells the executor to drive that future to completion before moving on. Then we do the same for task 2.

There’s an important caveat here: we’re using std::thread::sleep, which blocks the entire thread. In this simple block_on executor, that means nothing else can run during the sleep. To get true non-blocking behavior, where one task pausing allows another to run, two things have to change: the tasks must be run concurrently (for example with futures::join! or by spawning them on a runtime) instead of being awaited one after the other, and the blocking sleep must be replaced with an async-aware timer. Runtimes like tokio or async-std provide such timers and can multiplex many tasks onto a small pool of threads.

This example demonstrates the async/await syntax and control flow structure. It’s the absolute simplest, runnable way to show async/await in action without pulling in a large runtime.
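To see the laziness of futures without any external crate, the sketch below swaps futures::executor::block_on for a hand-rolled, deliberately tiny block_on built from std::task::Wake. It is an illustration under simplifying assumptions (one future, one thread), not a substitute for a real executor, and the names ThreadWaker, fetch, and lazy_demo are made up for this example.

```rust
use std::future::Future;
use std::pin::pin;
use std::sync::atomic::{AtomicBool, Ordering};
use std::sync::Arc;
use std::task::{Context, Poll, Wake, Waker};
use std::thread::{self, Thread};

/// Wakes the parked thread when the future signals it can make progress.
struct ThreadWaker(Thread);

impl Wake for ThreadWaker {
    fn wake(self: Arc<Self>) {
        self.0.unpark();
    }
}

/// A minimal stand-in executor: polls one future on the current thread
/// until it is ready, parking the thread whenever the future is pending.
fn block_on<F: Future>(future: F) -> F::Output {
    let mut future = pin!(future);
    let waker = Waker::from(Arc::new(ThreadWaker(thread::current())));
    let mut cx = Context::from_waker(&waker);
    loop {
        match future.as_mut().poll(&mut cx) {
            Poll::Ready(value) => return value,
            Poll::Pending => thread::park(),
        }
    }
}

/// Hypothetical task: records that its body has started running.
async fn fetch(id: u32, started: &AtomicBool) -> String {
    started.store(true, Ordering::SeqCst);
    format!("Data from task {}", id)
}

/// Returns whether the body had run before block_on, the result,
/// and whether the body had run after block_on.
fn lazy_demo() -> (bool, String, bool) {
    let started = AtomicBool::new(false);

    // Calling the async fn only builds the future; the body has not run yet.
    let future = fetch(1, &started);
    let started_before = started.load(Ordering::SeqCst);

    // Polling the future is what actually executes the body.
    let data = block_on(future);
    let started_after = started.load(Ordering::SeqCst);

    (started_before, data, started_after)
}

fn main() {
    let (before, data, after) = lazy_demo();
    println!("Body ran before block_on: {}", before);
    println!("Result: {}", data);
    println!("Body ran after block_on: {}", after);
}
```

Production executors add task queues, timers, and I/O integration on top of this same poll-and-wake loop.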

Design Guidance: Message Passing vs Shared State

Prefer message passing for simplicity where possible. When data is sent over a channel, ownership transfers cleanly — only one thread “owns” the data at a time. This reduces lock contention, makes deadlocks less likely, and is often more intuitive than tracking which thread has locked which piece of shared memory.

Choose message passing first when:

- Tasks can be largely independent and only need to communicate results or signals

- You want to clearly define the “owner” of data at each stage of a process

- You want to avoid the complexities of fine-grained locking

Shared state with locks is sometimes necessary or more efficient, especially for data that truly needs to be accessed and modified by many threads frequently (like a shared cache). But as a general guideline, if you can model your concurrency with message passing without significant contortions, it’s often the better path. “Share memory by communicating” is a good mantra.

When you do use locks, keep critical sections short. The longer a thread holds a lock, the longer other threads have to wait. This waiting — called lock contention — can severely degrade performance in a multithreaded application. Lock, do the minimum work necessary, and unlock.
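As an illustration of a short critical section, the sketch below does its slow computation before taking the lock and holds the mutex only for a single push. The expensive and collect_results functions are hypothetical, and the "expensive" work is just a stand-in sum.

```rust
use std::sync::{Arc, Mutex};
use std::thread;

/// Hypothetical stand-in for slow work: the sum of squares from 1 to n.
fn expensive(n: u64) -> u64 {
    (1..=n).map(|x| x * x).sum()
}

/// Hypothetical helper: computes one result per input on its own thread
/// and returns the results in sorted order.
fn collect_results(inputs: Vec<u64>) -> Vec<u64> {
    let results = Arc::new(Mutex::new(Vec::new()));

    let handles: Vec<_> = inputs
        .into_iter()
        .map(|n| {
            let results = Arc::clone(&results);
            thread::spawn(move || {
                // Do the slow part without holding the lock.
                let value = expensive(n);
                // The guard is a temporary, so the lock is held only for
                // this one statement.
                results.lock().unwrap().push(value);
            })
        })
        .collect();

    for handle in handles {
        handle.join().unwrap();
    }

    let mut out = results.lock().unwrap().clone();
    out.sort(); // arrival order depends on scheduling, so sort for stable output
    out
}

fn main() {
    println!("Results: {:?}", collect_results(vec![3, 1, 2]));
}
```

Had the call to expensive been made while holding the guard, the threads would effectively run one after another.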

Wrapping Up

Rust’s approach to concurrency is unique in the programming language landscape. Where other languages rely on runtime checks, conventions, or prayer, Rust uses its type system and ownership model to catch concurrency bugs at compile time. The Send and Sync traits, enforced automatically by the compiler, ensure that you can’t accidentally share data unsafely between threads.

The toolkit is comprehensive: std::thread::spawn for OS threads, Arc<T> and Mutex<T> for shared mutable state, RwLock<T> for read-heavy workloads, mpsc channels for message passing, and async/await for lightweight non-blocking concurrency. Each tool has its place, and the compiler helps you choose correctly.

Listen to the compiler. When it gives you errors related to Send, Sync, or lifetimes in a concurrent context, it’s pointing to a genuine safety issue. Rethink your design, reach for the right primitive, and let Rust’s guarantees work for you.